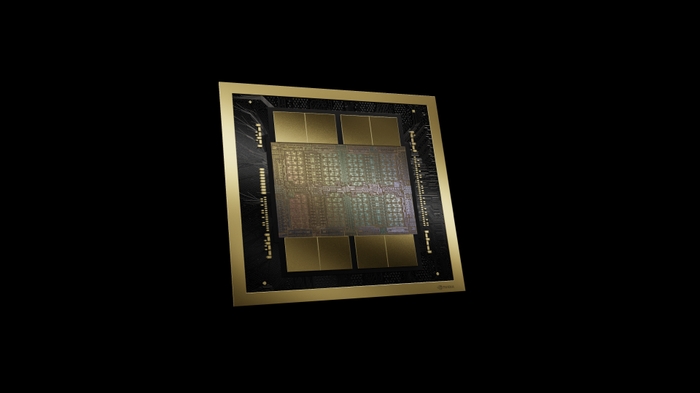

NVIDIA HGX B300 & B200 Servers

8-GPU NVLink servers for large-scale AI training. Up to 2.3TB of HBM3e memory, 800Gbps InfiniBand networking, and an NVLink interconnect delivering up to 1.8 TB/s of GPU-to-GPU bandwidth for training the largest AI models.

What is NVIDIA HGX?

NVIDIA HGX is the reference architecture for 8-GPU servers purpose-built for large-scale AI training. Unlike standard multi-GPU servers where GPUs communicate over PCIe, HGX systems use NVIDIA NVLink to create a unified GPU fabric with massive bandwidth between all 8 GPUs.

This means your training jobs can scale close to linearly across all 8 GPUs, removing much of the communication bottleneck that limits standard PCIe-based server configurations. Combined with InfiniBand networking, HGX servers form the building blocks of the world's most powerful AI supercomputers.
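
To make the interconnect argument concrete, here is a rough back-of-the-envelope sketch. It uses the standard bandwidth-only model of a ring all-reduce, in which each GPU transfers 2(N-1)/N of the gradient payload. The 10-billion-parameter payload, the PCIe Gen5 x16 figure of about 64 GB/s per direction, and the split of NVLink's 1.8 TB/s into 0.9 TB/s per direction are assumptions for illustration. Real jobs also depend on latency, topology, and compute/communication overlap, so treat the result as an order-of-magnitude guide only.

```python
def ring_allreduce_seconds(payload_bytes: float, num_gpus: int,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each GPU sends (and receives) 2 * (N - 1) / N of the payload.
    Latency and compute overlap are ignored.
    """
    traffic = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return traffic / link_bytes_per_s


# Assumption: 10B parameters with 2-byte (FP16/BF16) gradients.
GRADIENT_BYTES = 10e9 * 2
# Assumption: half of the quoted 1.8 TB/s bidirectional NVLink figure.
NVLINK_PER_DIRECTION = 0.9e12
# Assumption: PCIe Gen5 x16, roughly 64 GB/s per direction.
PCIE_PER_DIRECTION = 64e9

nvlink_s = ring_allreduce_seconds(GRADIENT_BYTES, 8, NVLINK_PER_DIRECTION)
pcie_s = ring_allreduce_seconds(GRADIENT_BYTES, 8, PCIE_PER_DIRECTION)

print(f"NVLink estimate: {nvlink_s * 1000:.1f} ms per gradient sync")
print(f"PCIe estimate:   {pcie_s * 1000:.1f} ms per gradient sync")
print(f"Ratio:           {pcie_s / nvlink_s:.1f}x")
```

Under these assumptions the gap between the two estimates is simply the ratio of the link bandwidths, which is why the interconnect matters most when gradient synchronization is a large share of each training step.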

NVLink 5th Generation

Up to 1.8 TB/s bidirectional bandwidth between GPUs

Unified GPU Memory

Up to 2.3TB of HBM3e in aggregate across all 8 GPUs (8 x 288GB)

800Gbps InfiniBand

Scale to multi-node clusters with ultra-low latency

HGX Server Configurations

Five configurations spanning three GPU generations. Every system is built to order.

NVIDIA HGX B300 Server (AMD EPYC)

Flagship 8-GPU Blackwell Ultra server with AMD EPYC. Maximum GPU memory capacity and bandwidth for training the largest LLMs.

| Component | Specification |
|---|---|
| GPU | 8x NVIDIA B300 SXM5, 288GB HBM3e each |
| CPU | Dual AMD EPYC 9005/9004 |
| System Memory | 24x DDR5 ECC, up to 3TB |
| GPU Memory | 2.3TB HBM3e |
| Storage | 12x 2.5" NVMe |
| Networking | 8x 800Gbps IB + 2x 1GbE |

NVIDIA HGX B300 Server (Intel Xeon)

Blackwell Ultra with Intel Xeon 6700-series. Ideal for organizations standardized on Intel that need maximum system memory capacity (up to 4TB).

| Component | Specification |
|---|---|
| GPU | 8x NVIDIA B300 SXM5, 288GB HBM3e each |
| CPU | Dual Intel Xeon 6700P/6700E |
| System Memory | 32x DDR5 ECC, up to 4TB |
| GPU Memory | 2.3TB HBM3e |
| Storage | 8x 2.5" NVMe |
| Networking | 8x 800Gbps IB + 1x 10GbE |

NVIDIA HGX B200 Server (AMD EPYC)

Blackwell with AMD EPYC. The performance sweet spot for enterprise AI training.

| Component | Specification |
|---|---|
| GPU | 8x NVIDIA B200 SXM5, 192GB HBM3e each |
| CPU | Dual AMD EPYC 9005/9004 |
| GPU Memory | 1.5TB HBM3e |
| System Memory | 24x DDR5 ECC (3TB) |
| Storage | 12x 2.5" NVMe |
| Networking | InfiniBand + 2x 1GbE |

NVIDIA HGX B200 Server (Intel Xeon)

Blackwell GPUs with Intel Xeon Scalable. Compatible with existing Intel datacenter tooling.

| Component | Specification |
|---|---|
| GPU | 8x NVIDIA B200 SXM5, 192GB HBM3e each |
| CPU | Dual Intel Xeon Scalable |
| GPU Memory | 1.5TB HBM3e |
| System Memory | 24x DDR5 ECC (3TB) |
| Storage | 12x 2.5" Hot-Swap |
| Networking | InfiniBand + 2x 1GbE |

NVIDIA HGX H200 Server (AMD EPYC)

Proven Hopper architecture with HBM3e. The best-value entry point into 8-GPU NVLink training, with the shortest lead times.

| Component | Specification |
|---|---|
| GPU | 8x NVIDIA H200 SXM5, 141GB HBM3e each |
| CPU | Dual AMD EPYC 9005/9004 |
| GPU Memory | 1.1TB HBM3e |
| System Memory | 24x DDR5 ECC (3TB) |
| Storage | 12x 2.5" NVMe |
| Networking | InfiniBand + 2x 1GbE |

GPU Generation Comparison

Understanding B300 vs B200 vs H200 helps you match the right GPU to your workload and budget.

| Specification | B300 Blackwell Ultra | B200 Blackwell | H200 Hopper |
|---|---|---|---|
| Memory per GPU | 288GB HBM3e | 192GB HBM3e | 141GB HBM3e |
| Total GPU Memory (8x) | 2.3TB | 1.5TB | 1.1TB |
| Memory Bandwidth | 8 TB/s per GPU | 8 TB/s per GPU | 4.8 TB/s per GPU |
| NVLink Generation | 5th Gen | 5th Gen | 4th Gen |
| NVLink Bandwidth (per GPU) | 1.8 TB/s | 1.8 TB/s | 900 GB/s |
| InfiniBand | 800Gbps (XDR) | 800Gbps (XDR) | 400Gbps (NDR) |
| Best For | Largest LLMs, frontier models | Enterprise AI, large models | Proven workloads, best value |
| Starting Price (8-GPU) | $485,918 | $394,707 | $320,232 |
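
As a quick way to compare the three options on capacity and cost, the sketch below recomputes the total GPU memory from the per-GPU figures and derives a starting price per GB of HBM3e. The numbers are taken from the table above. The `HgxOption` class and the price-per-GB metric are illustrative assumptions, not an official sizing method, and starting prices may not reflect a final built-to-order quote.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HgxOption:
    """One column of the comparison table (hypothetical helper type)."""
    name: str
    gb_per_gpu: int
    starting_price_usd: int
    gpus: int = 8

    @property
    def total_gb(self) -> int:
        # Aggregate HBM3e across all GPUs in the server.
        return self.gb_per_gpu * self.gpus

    @property
    def usd_per_gb(self) -> float:
        # Starting price divided by aggregate GPU memory.
        return self.starting_price_usd / self.total_gb


OPTIONS = [
    HgxOption("B300", 288, 485_918),
    HgxOption("B200", 192, 394_707),
    HgxOption("H200", 141, 320_232),
]

for option in OPTIONS:
    print(f"{option.name}: {option.total_gb} GB total, "
          f"${option.usd_per_gb:,.0f} per GB of HBM3e")
```

On starting prices alone, the B300 works out cheapest per GB of GPU memory even though it has the highest sticker price, which is worth weighing when memory capacity is the binding constraint.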

Built for the Most Demanding AI Workloads

HGX servers power the world's largest AI training runs.

LLM Training

Train large language models with billions of parameters. Fine-tune foundation models on proprietary data.

Multi-Modal AI

Train models that process text, images, video, and audio simultaneously.

Scientific Computing

Molecular dynamics, climate modeling, drug discovery, and genomics research.

Financial Modeling

Risk analysis, quantitative trading models, fraud detection, and real-time financial simulations.
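
For the LLM training use case above, a rough sizing check can help decide which configuration to consider first. The sketch assumes full training with mixed-precision Adam, a common rule of thumb of about 16 bytes per parameter (2 for weights, 2 for gradients, 12 for FP32 optimizer state). It ignores activations, framework overhead, and fragmentation, so real headroom is smaller. The function names are hypothetical, and the capacities are 8 x the per-GPU figures listed on this page.

```python
# Assumption: mixed-precision Adam, ~16 bytes of training state per parameter.
BYTES_PER_PARAM = 16

# Aggregate HBM3e per 8-GPU server, in GB (8 x per-GPU capacity).
HBM_GB = {"B300": 8 * 288, "B200": 8 * 192, "H200": 8 * 141}


def training_state_gb(params_billions: float) -> float:
    """GB of weights, gradients, and optimizer state (activations excluded)."""
    # billions of parameters * bytes per parameter = gigabytes
    return params_billions * BYTES_PER_PARAM


def systems_that_fit(params_billions: float) -> list[str]:
    """Servers whose aggregate HBM3e can hold the training state on one node."""
    needed = training_state_gb(params_billions)
    return [name for name, capacity in HBM_GB.items() if capacity >= needed]


for size in (70, 100, 150):
    fits = systems_that_fit(size) or ["none (multi-node or sharding needed)"]
    print(f"{size}B params: ~{training_state_gb(size):.0f} GB -> {', '.join(fits)}")
```

Fine-tuning with parameter-efficient methods or lower-precision optimizers needs far less memory per parameter, so this estimate is closer to an upper bound for single-node planning.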

White-Glove Deployment & Support

A $400K+ server deserves expert deployment. PTG handles every detail.

Site Assessment

Power capacity analysis, cooling evaluation, rack space planning, and network infrastructure review.

Rack Installation

Professional mounting, cabling, and power distribution by certified technicians. On-site in Raleigh-Durham.

Software Stack

NVIDIA AI Enterprise, CUDA, cuDNN, NCCL, PyTorch, TensorFlow, container runtimes, and monitoring.

Network Architecture

InfiniBand fabric design, switch configuration, and multi-node cluster networking.

Compliance-Ready

CMMC, HIPAA, and NIST 800-171 compliant deployments by our CMMC-RP certified team.

Ongoing Support

24/7 monitoring, proactive maintenance, firmware updates, and performance optimization.

Frequently Asked Questions

What power and cooling do HGX servers require?

What is the difference between B300, B200, and H200?

Can I start with H200 and upgrade to Blackwell later?

What networking do I need for multi-node training?

What software comes pre-installed?

How long is the delivery lead time?

Does PTG offer financing?

Ready to Build Your AI Training Infrastructure?

Our CMMC-RP certified team will help you select the right HGX configuration, design your network architecture, and deliver a production-ready AI training platform.